Securing microservices in the Enterprise

I've had a couple of really interesting conversations over the past few months about the complexities of deploying microservices in typical large enterprises, so I decided to take some time to pull my thoughts together.

Before we start, however, I want to ground some terminology:

- Microservice: Everyone loves a good buzzword, but I actually mean any service, in particular ones that scale horizontally and require network communication. "Micro" just usually means an exponential increase in RPC calls.

- Enterprise: Any organisation that makes typically intense use of firewalls to manage communications between its services. I see this a lot in enterprises, not so much in small start-ups knocking out cloud-native applications.

Interestingly, the problem I'm going to discuss is one that is exacerbated by microservices, not a product of them.

As usual, I apologise in advance for my terrible drawing skills. But a picture, even drawn as poorly as mine, says 1000 words.

The problem

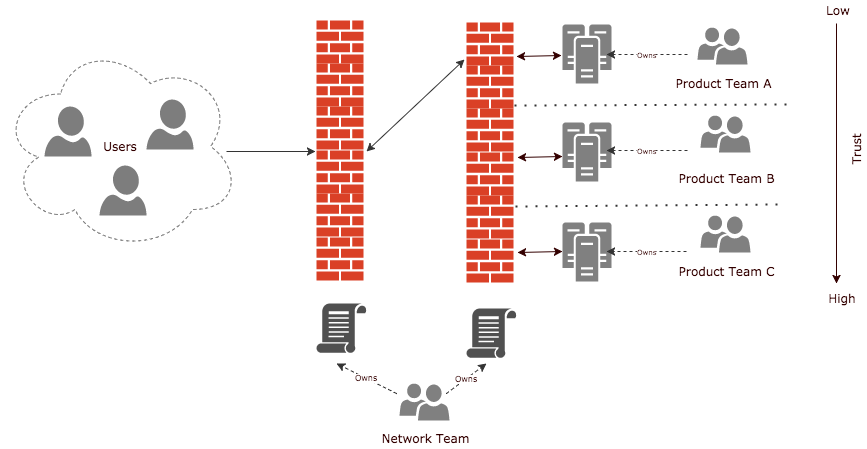

I'm sure you've seen (oversimplified) setups like this before:

In principle, what we have is:

- An external firewall, permitting those scary people a little hole into our internal world.

- An internal firewall(s), which manages communication between services, or groups of services, managed by a network team.

- Services managed by individual product teams.

What we end up with is an internal firewall policy that is a behemoth set of rules which only the network team understands (actually, most of the time they don't, because it's just too complex).

That centralisation is a productivity killer too. What happens if:

- Product Team A wants to scale their services dynamically with load?

- Product Team B decides their service is no longer needed?

- A new Product Team comes along and wants to expose a new service?

- Product Team C decides they need to talk to Product Team A?

In all of these scenarios, there is a handoff to the Network Team. Let's play through the fourth scenario:

"C" asks "A" for access to their service. "A" agrees and grants "C" Authentication credentials, linked to the relevant Authorisation.

However, C still cannot talk to A, because we need the Network team to also grant access to A. The network team will probably go back to A, just to be sure that it's OK, even though A has already said it's OK. Sometimes they'll get it wrong too, and we end up going back and forth until we get it right. But why?

Surely the people who decide which services can talk to A are A, and they should be empowered as a cross-functional product team to do so?

It also doesn't scale. Take Uber as an example: some 8000 repos, and reportedly nobody there can say exactly how many services they run because it changes that much. Imagine the size and complexity of their firewall policy if it was done this way, not to mention the defect rate.

The problem with scaling isn't just about complexity either. Typical microservice architectures result in a significant number of RPC calls, far more than your monolithic systems. This puts significant load onto your firewalls, which can carry an almighty cost.
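To get a feel for why call volume grows so quickly, here is a rough back-of-the-envelope sketch. It assumes a hypothetical, uniform call tree in which every service calls `fan_out` downstream services, `depth` levels deep, and every hop crosses the internal firewall. The function name and the numbers are purely illustrative, not measurements from a real system.

```python
def internal_calls(fan_out: int, depth: int) -> int:
    """Count the internal RPCs triggered by one external request.

    Assumes a uniform call tree: each service at one level calls
    `fan_out` services at the next level, for `depth` levels.
    """
    return sum(fan_out ** level for level in range(1, depth + 1))


# A monolith handles the request in-process: no internal hops.
monolith = internal_calls(fan_out=0, depth=0)

# Hypothetical microservice estate: 3 downstream calls per service, 3 levels deep.
microservices = internal_calls(fan_out=3, depth=3)

print(monolith, microservices)
```

Under those assumptions, a single external request turns into 3 + 9 + 27 = 39 internal calls, each of which the central firewall has to inspect.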

The standard response

What I hear a lot at this point is that the centralised firewall policy isn't the problem, it's the organisational structure. The teams need network engineers embedded in them in order to reduce handoffs and friction.

Another response is to make the firewall policy more "self service". That's also a lovely goal, but have you ever seen an organisation that has effectively implemented product team managed firewall policy editing without some obscene amount of governance? Nope, I haven't.

And going back to the big enterprises, have you ever seen a commercial firewall managed in a CI/CD/Infra as Code fashion with all the changes under source control, like you would a modern/cloud firewall? Try doing that with a 10-year-old Juniper (other old firewalls are available!).

Sure, any of these things may ease the pain slightly but why put a plaster on a wound that needs stitches?

Get rid of the centralised internal firewall(s)

This is where I run and hide from the angry network and security folk. But wait, before you impale me with your pitchforks and burn me at the stake, let me try and explain!

A good comparison is the good old Enterprise Service Bus. We've all been there: we built this massive thing in the middle of our services that routes and translates data between all of them. It went horribly wrong, and then we went through the process of reducing it down to "smart endpoints and dumb pipes", pushing as many responsibilities as possible to the consumers.

To be clear, I am not saying we should get rid of the capabilities that the firewall offers. I'm saying we should get rid of the centralisation and push those capabilities to the product teams. Think about other ways to secure communication between microservices: does that traffic always need to go via a firewall?

Let's solve the problem in a different way, whilst simultaneously improving our security stance.

Zero Trust Networks

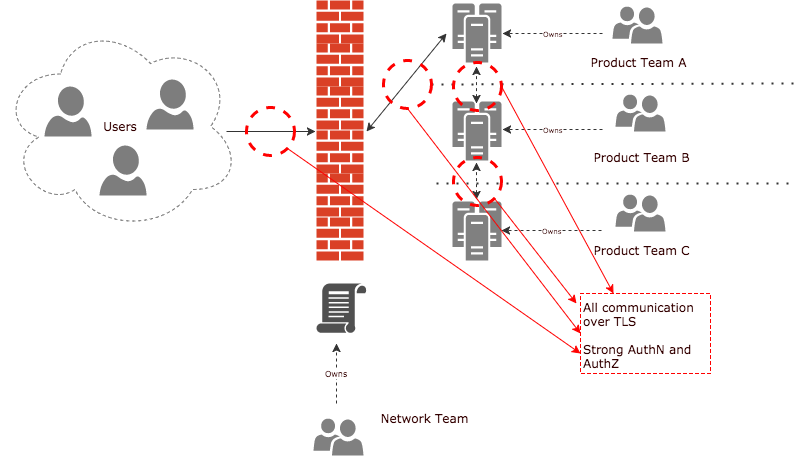

So if every product team is responsible for its own access control, what "zone" are their services in? The answer is their own zone. And let's treat every service in that zone like it is internet facing, even if it isn't.

Treat every service as if it was on the internet

Read about Google BeyondCorp. Network perimeters lure developers into a false sense of security: they treat the firewall as the guardian of their application and become more lax about the service Authentication and Authorisation that they implement. The result is a complete field day for anyone who manages to get access to your zone.

To elaborate a little more, think about what you do when you log onto your internet banking. You use a secure communication channel (TLS) and some strong authentication (two factor) linked to your authorisations. We do that because, well, "the internet".

Let's just apply the same practices inside our zone too. Mutual TLS is one way to achieve this.

Stronger Auth (Mutual TLS)

Mutual authentication or two-way authentication refers to two parties authenticating each other at the same time, being a default mode of authentication in some protocols (IKE, SSH) and optional in others (TLS).

Mutual TLS authentication, or certificate-based mutual authentication, refers to two parties authenticating each other by verifying the digital certificate each one provides, so that both parties are assured of the other's identity.

If both parties are absolutely sure of each other's identity, they can make informed decisions about whether to grant access, and the communication between them is encrypted. If you have such a setup in place, you have to ask yourself what significant value a firewall in the middle of this communication adds by saying "C can talk to A" (other than an L3 drop).
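As a minimal sketch of what that looks like for service A, here is one way to do it with Python's standard `ssl` module. It is an illustration under assumptions, not a production setup: the allow-list `ALLOWED_CALLERS`, the identity `orders.team-c.internal`, the file path parameters and the existence of an internal CA that issues service certificates are all hypothetical.

```python
import ssl

# Hypothetical allow-list owned by Product Team A: the service identities
# (taken from client certificates) that are permitted to call A.
ALLOWED_CALLERS = {"orders.team-c.internal"}


def make_server_context(cert_file: str, key_file: str, ca_file: str) -> ssl.SSLContext:
    """Build a TLS context for service A that demands a client certificate."""
    # Trust only the internal CA that issues service certificates (assumed to exist).
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH, cafile=ca_file)
    # CERT_REQUIRED makes the handshake fail unless the caller presents a
    # certificate signed by that CA. This is the "mutual" part of mutual TLS.
    ctx.verify_mode = ssl.CERT_REQUIRED
    # A's own certificate and key, so callers can verify A in return.
    ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


def caller_identities(peer_cert: dict) -> set:
    """Extract DNS names from a dict shaped like SSLSocket.getpeercert() output."""
    return {value for kind, value in peer_cert.get("subjectAltName", ()) if kind == "DNS"}


def is_authorised(peer_cert: dict) -> bool:
    """Authentication proved who is calling; this decides whether they may."""
    return bool(caller_identities(peer_cert) & ALLOWED_CALLERS)
```

In a real server, the check would run on each accepted connection after the handshake, for example `is_authorised(conn.getpeercert())`. The point is that the "C can talk to A" decision lives with A, next to the code it protects.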

Typically mTLS has been a bit of a logistical nightmare: managing client certificates and identities for services, revocation, expiration etc. However, if you're on the container bandwagon, it's now super easy.

Watch this talk from Docker to learn about how mutual TLS is built into Swarm, or check out service meshes like Istio, which run on top of Kubernetes, to do this for you.

Disseminated network security

As I said earlier, I don't want to get rid of network level security. I just want to remove the centralisation and the facade of security brought on by "low, medium and high" labels.

So rather than trying to group services into those zones, give each product team control of its own services, zones and network policies. What you end up with is lots of little zones, owned by the product teams, rather than a few monolithic zones, owned by one team.
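The idea of many small, team-owned zones can be sketched as a default-deny policy that only the owning team is allowed to change. This is a toy model to show the shape of the ownership rule, not a real network policy engine. The `Zone` class and the team names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Zone:
    """A small zone owned by one product team, closed by default."""
    owner: str
    allowed_callers: set = field(default_factory=set)

    def grant(self, requested_by: str, caller: str) -> None:
        """Only the owning team may open its zone to a caller."""
        if requested_by != self.owner:
            raise PermissionError(f"{requested_by} does not own this zone")
        self.allowed_callers.add(caller)

    def permits(self, caller: str) -> bool:
        """Default deny: anything not explicitly granted is refused."""
        return caller in self.allowed_callers


zone_a = Zone(owner="team-a")
# Team A decides that Team C may call in. No handoff to a central team.
zone_a.grant(requested_by="team-a", caller="team-c")
```

In practice the same rule would be expressed in whatever policy mechanism your platform provides. The design choice that matters is that the rule set is small and lives with the team that understands it.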

Microwalls. You heard it here first, folks.

In summary

I believe a lot of larger organisations have a bit of a sunk cost fallacy when it comes to their firewall setup, and with that comes the ingrained perception of it being the most secure way to operate.

Hopefully the points raised in this article have helped to articulate how the same, if not better, security can be achieved without the associated centralisation.